The news outlet investigated these activities alongside researchers from the University of Massachusetts Amherst and Stanford University, and their findings were extremely unsettling. According to the report, “Instagram connects pedophiles and guides them to content sellers via recommendation systems that excel at linking those who share niche interests, the Journal and the academic researchers found.”

The platform also makes it easy for people to find this type of illicit material through hashtag searches, with offerings ranging from videos and images to in-person meetings.

According to the report, after setting up test accounts and viewing a single account that was part of a pedophile network, one researcher was inundated with recommendations from those peddling this type of content.

The report noted: “Following just a handful of these recommendations was enough to flood a test account with content that sexualizes children.”

They found more than 400 sellers of “self-generated child sex material,” meaning accounts that claim to be run by the children themselves. Some claim to be as young as 12, and many use overtly sexual handles that make no attempt to hide their purpose. These accounts had thousands of unique followers. And it’s not only the accounts actively buying and selling this content that are concerning; many associated users also discuss ways to gain access to minors.

The Wall Street Journal reported: “Current and former Meta employees who have worked on Instagram child-safety initiatives estimate the number of accounts that exist primarily to follow such content is in the high hundreds of thousands, if not millions.”

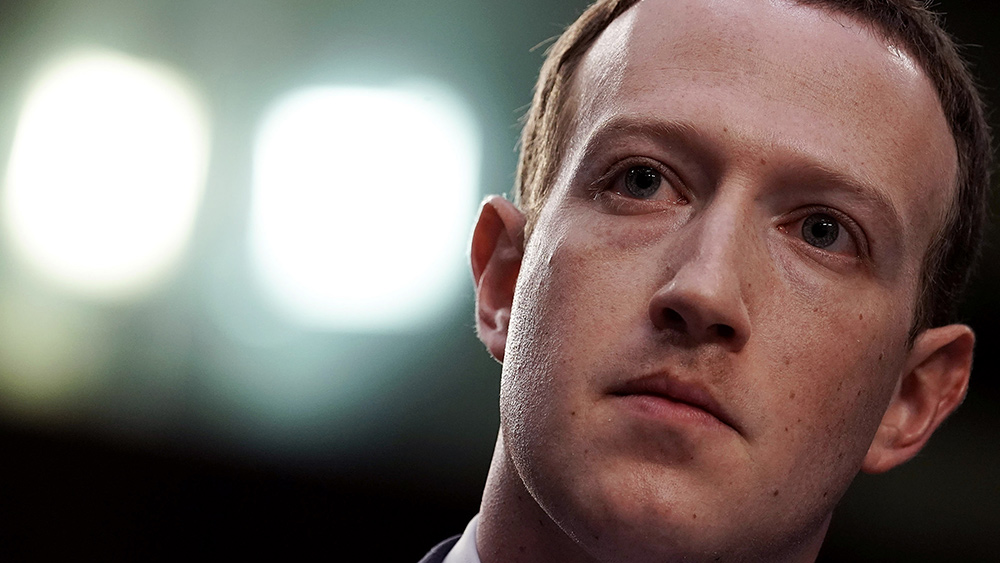

Instagram’s owner, Meta, says it works to remove such users, and it closed nearly half a million of these accounts in January for violating its child safety policy. Even so, Instagram has had more trouble curbing this content than other social media platforms, both because of design features that actively promote it and because of weak enforcement.

In fact, the algorithms used by Instagram are so influential that even a quick glance at an account connected to the pedophile community can prompt the social media platform to start suggesting users join it.

Former Meta Chief Security Officer and current Stanford Internet Observatory Director Alex Stamos said: “That a team of three academics with limited access could find such a huge network should set off alarms at Meta. I hope the company reinvests in human investigators.”

Instagram has the power to stop this – so why aren't they doing anything?

The Wall Street Journal also pointed out that Instagram allows people to search for terms related to pedophilia despite knowing that searchers could be looking for illegal material. It notes that users searching for certain related terms will be presented with a pop-up screen warning them that their results could “contain images of child sexual abuse” and that consuming and producing this material can cause children “extreme harm.” However, users can then opt to see the results anyway.

Meta has suppressed account networks it deemed dangerous in the past, and many experts are wondering why it isn’t doing more to prevent child exploitation. After all, the company was all too willing to suppress accounts connected to the events of January 6, 2021, so it stands to reason that it could take similar action against pedophilic content.

Unfortunately, they do not appear to be as quick to protect children and put a stop to this behavior as they are to suppress political stances they don’t agree with.
